The Human and Agent Org Chart

Designing roles, quality, and accountability for scale

By Shelley Holm

This article is part of Forum Solutions’ From Prediction to Adaptation series on AI strategy and enablement as an operating model transformation.

Key Takeaways:

Agents must be designed into the operating model, not bolted onto workflows.

Accountability must be explicit, including agent escalation and override rules.

Trust, quality, and safety must be design requirements.

As AI creates new capacity, many organizations respond by trying to add AI the same way they added software: purchasing licenses, training users, and hoping adoption spreads. That approach fails because agents are not tools. Agents behave more like a new category of labor. They are fast, tireless, pattern-driven, and capable of acting at scale, but they are also prone to confident errors when they operate without clear boundaries.

The inflection point comes when leaders stop asking how people should use AI and start asking how AI should participate in the organization.

Treating AI agents as participants forces clarity. Leaders must decide what work belongs where, what quality means in practice, and who remains accountable when agents act on the organization’s behalf.

Organizational design

Designing a human-and-agent organization requires deliberate choices about structure, decision rights, and accountability. Trust, accountability, and agility cannot be left to culture. They must be designed into the operating model.

Research published in California Management Review shows that effective human-AI organizations treat trust, accountability, and agility as design requirements, not aspirational values [1]. Most organizations underestimate how much discipline this level of design requires. Moving from pilots to scale demands a formal AI operating model and repeatable processes for introducing, governing, and retiring agents as needs change.

Without this rigor, gaps in roles and responsibilities remain implicit until something breaks. At scale, those gaps surface quickly and expensively.

Designing for what humans do best

Design is not only about where agents fit. It is also about where they do not.

MIT Sloan research highlights five uniquely human capabilities that remain essential as AI scales: empathy, presence, opinion and judgment, creativity, and hope [2]. A well-designed organization deliberately reserves this work for humans while assigning agents the tasks they consistently outperform humans at:

Repetitive, time-consuming execution

Pattern recognition across large data sets

Consistency-driven analysis and synthesis

Rapid drafting, summarization, and classification

The goal is to stop wasting human judgment, creativity, and relational capacity on work that does not require it.

What a scalable human-and-agent organization includes

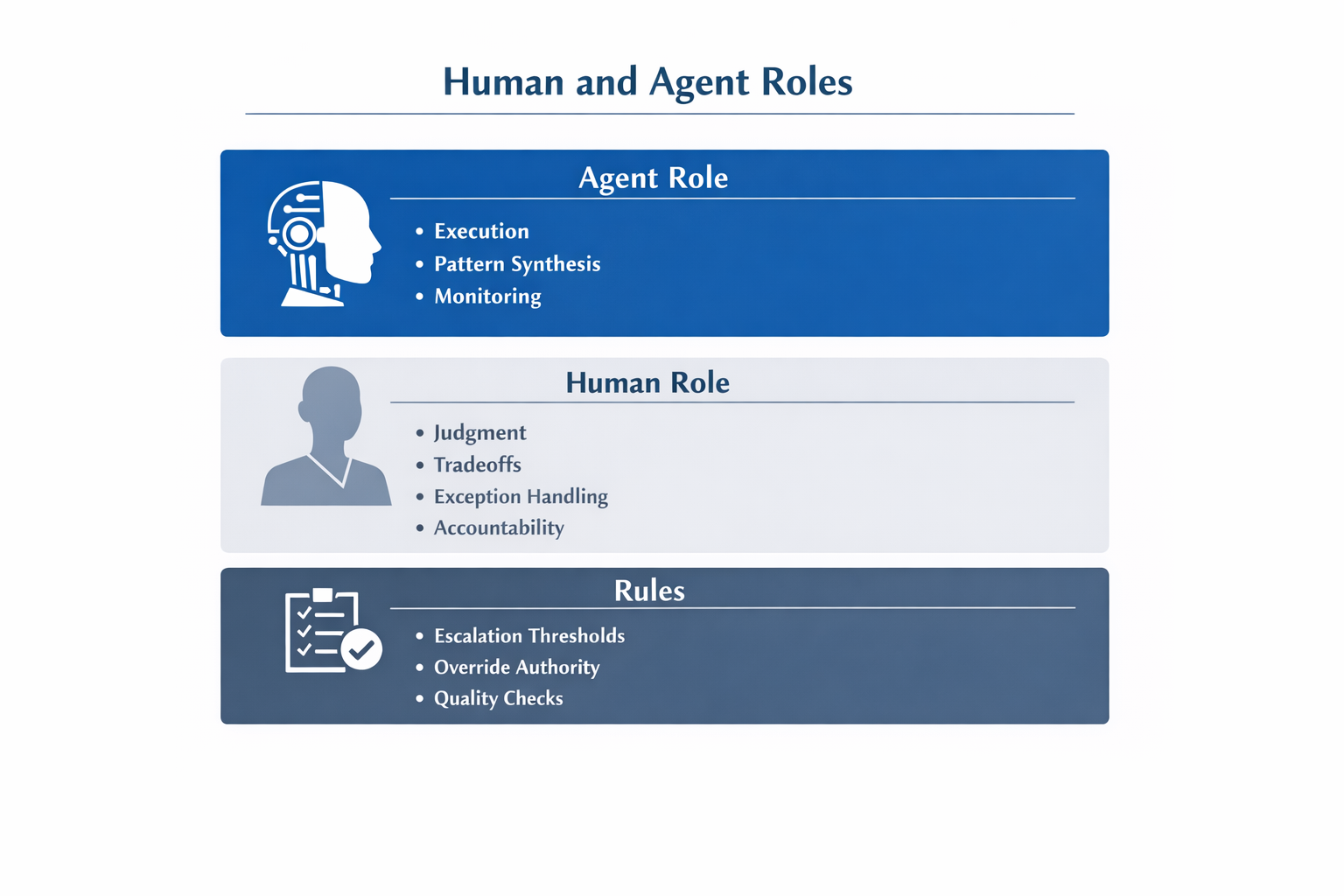

A scalable human-and-agent organization makes design choices explicit:

Agent roles with clear boundaries and decision scope

Quality standards and safety constraints embedded by design

Named human owners accountable for outcomes, not activity

Escalation and override rules that preserve human judgment

Feedback loops that continuously improve agent performance
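
For teams that want to see these choices written down rather than implied, the elements above can be expressed as a small data structure. The sketch below is illustrative only and is not drawn from the cited research: the names AgentRole, Decision, min_confidence, and the invoice-triage example are hypothetical, and a numeric confidence threshold is just one assumed way to stand in for a quality standard.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ACT = "act"            # the agent may proceed on its own
    ESCALATE = "escalate"  # the work goes to the named human owner


@dataclass
class AgentRole:
    """Hypothetical record of one agent's role in the operating model."""
    name: str
    owner: str                        # named human accountable for outcomes
    allowed_actions: frozenset[str]   # the agent's decision scope
    min_confidence: float             # assumed quality bar for acting alone
    feedback: list[str] = field(default_factory=list)

    def decide(self, action: str, confidence: float) -> Decision:
        """Act only inside the role's scope and at or above its quality bar."""
        if action not in self.allowed_actions:
            return Decision.ESCALATE
        if confidence < self.min_confidence:
            return Decision.ESCALATE
        return Decision.ACT

    def record_override(self, note: str) -> None:
        """Keep human overrides so they can feed later improvement."""
        self.feedback.append(note)


# Example: an agent that may classify and summarize, but never approve payments.
triage = AgentRole(
    name="invoice-triage",
    owner="accounts-payable-lead",
    allowed_actions=frozenset({"classify", "summarize"}),
    min_confidence=0.8,
)
assert triage.decide("classify", 0.93) is Decision.ACT
assert triage.decide("classify", 0.40) is Decision.ESCALATE
assert triage.decide("approve_payment", 0.99) is Decision.ESCALATE
triage.record_override("Owner reclassified invoice as disputed")
```

The point of the sketch is that scope, quality bar, owner, escalation, and feedback each become a named field or rule that someone has to fill in, which is what makes a gap visible before something breaks.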

When AI agents are treated as part of the organization rather than tools on the side, leaders gain leverage without surrendering control. Capacity expands, decision quality improves, and human work becomes more meaningful rather than more compressed.

Designing a human-and-agent organization creates leverage, but leverage alone is not enough. As AI accelerates pace and expands scope, the cognitive demands on people increase. Without deliberate attention to how humans experience work, even well-designed systems degrade over time.

The final article in this series explores how organizations sustain judgment, creativity, and decision quality as AI becomes embedded in everyday operations. It focuses on building change capability and protecting how humans think while working.

About Forum Solutions

Forum Solutions works with executives to translate these design choices into practice. Through strategy, operating model design, governance, and change enablement, we help organizations move beyond experimentation to sustained impact while protecting the human judgment AI ultimately depends on.

About the Author

Shelley Holm is Co‑Founder and Managing Director at Forum Solutions, where she works with executives to move AI from experimentation to enterprise impact through strategy, operating model redesign, and transformation at scale.

Footnotes

[1] Herman Vantrappen, “Designing a fluid organization of humans and AI agents,” California Management Review, October 9, 2025, https://cmr.berkeley.edu/2025/10/designing-a-fluid-organization-of-humans-and-ai-agents/.

[2] Thomas W. Malone, “Building human-centered AI systems,” MIT Sloan Management Review, 2018; see also MIT Sloan Management Review, “What humans still do better than AI,” on the EPOCH framework.