Strategy Requires Saying No: Governing AI for Focus, Judgment, and Scale

Why disciplined prioritization and decision design determine whether AI creates value or noise

By Shelley Holm

This article is part of Forum Solutions’ From Prediction to Adaptation series on AI strategy and enablement as an operating model transformation.

Key takeaways:

AI lowers the cost of action, so focus becomes the constraint

Governance clarifies decision rights, review thresholds, and accountability

Strategy becomes operational when priorities, sequencing, and adoption are designed together

As AI capabilities expand and new tools surface with increasing frequency, leaders face mounting pressure to act broadly and quickly rather than deliberately. The organizations realizing sustained value from AI are not the ones doing the most. They are the ones making deliberate choices about what matters now, what can wait, and what should never be automated at all.

AI adoption is not constrained by technology. It is constrained by leadership focus. When everything is a priority, attention becomes scarce. Without clear strategy and sequencing, organizations generate more activity without improving outcomes. Teams stay busy rather than effective, and decision quality declines as more initiatives compete for the same attention.

Why AI demands a different strategic lens

Deploying AI is fundamentally different from deploying traditional software. Software implementations are typically stabilized and then left largely unchanged until the next upgrade. AI does not behave this way. It evolves continuously and, in doing so, reshapes how work is done, how decisions are made, and how accountability is distributed.

Treating AI as a one‑time deployment is a common and costly mistake. It leads organizations to underestimate the ongoing change required and overestimate the durability of early design choices. An effective AI strategy therefore cannot focus only on what is deployed today. It must be anchored in how the organization expects to evolve over time.

That requires deliberate decisions about which activities should be automated, which should be augmented, and which must remain human‑led. These are not technical distinctions. They are strategic ones.

From experimentation to focus

Early experimentation is valuable. Staying in perpetual pilot mode is not. When experimentation is not followed by prioritization, organizations fragment. Results become inconsistent. Fatigue sets in.

Leaders translate opportunity into focus by asking practical questions:

Which decisions or workflows materially affect outcomes today?

Where do data quality and organizational readiness support adoption?

Which use cases align with enterprise priorities rather than individual enthusiasm?

This approach narrows the field intentionally. It prevents the common failure mode of deploying AI everywhere and improving nothing meaningfully.
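For teams that want to make this screening explicit, the three questions can be sketched as a simple filter over candidate use cases. The example below is illustrative only: the `UseCase` fields, the 1-to-5 scoring scale, and the `min_score` threshold are assumptions made for this sketch, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """A candidate AI use case, scored 1-5 on each question (hypothetical scale)."""
    name: str
    outcome_impact: int        # Does it materially affect outcomes today?
    readiness: int             # Do data quality and org readiness support it?
    enterprise_alignment: int  # Does it align with enterprise priorities?

    def total(self) -> int:
        return self.outcome_impact + self.readiness + self.enterprise_alignment


def prioritize(candidates, min_score=3):
    """Keep only use cases that clear the bar on all three questions,
    then order them from highest to lowest combined score."""
    shortlisted = [
        c for c in candidates
        if min(c.outcome_impact, c.readiness, c.enterprise_alignment) >= min_score
    ]
    return sorted(shortlisted, key=UseCase.total, reverse=True)
```

Requiring a minimum on every question, rather than ranking on the total alone, is a deliberate choice in this sketch: a use case with strong enthusiasm and ready data but little effect on outcomes is dropped instead of being carried along by its other scores.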

Prioritization also creates momentum. When early efforts are sequenced around impact, results become visible. Confidence grows. Adoption accelerates because people experience the value directly.

Governance as an enabler, not a constraint

As AI begins to influence decisions rather than simply execute tasks, governance becomes indispensable. AI is not merely a productivity tool. It drafts, recommends, classifies, and in some cases acts. Without clear boundaries, accountability blurs.

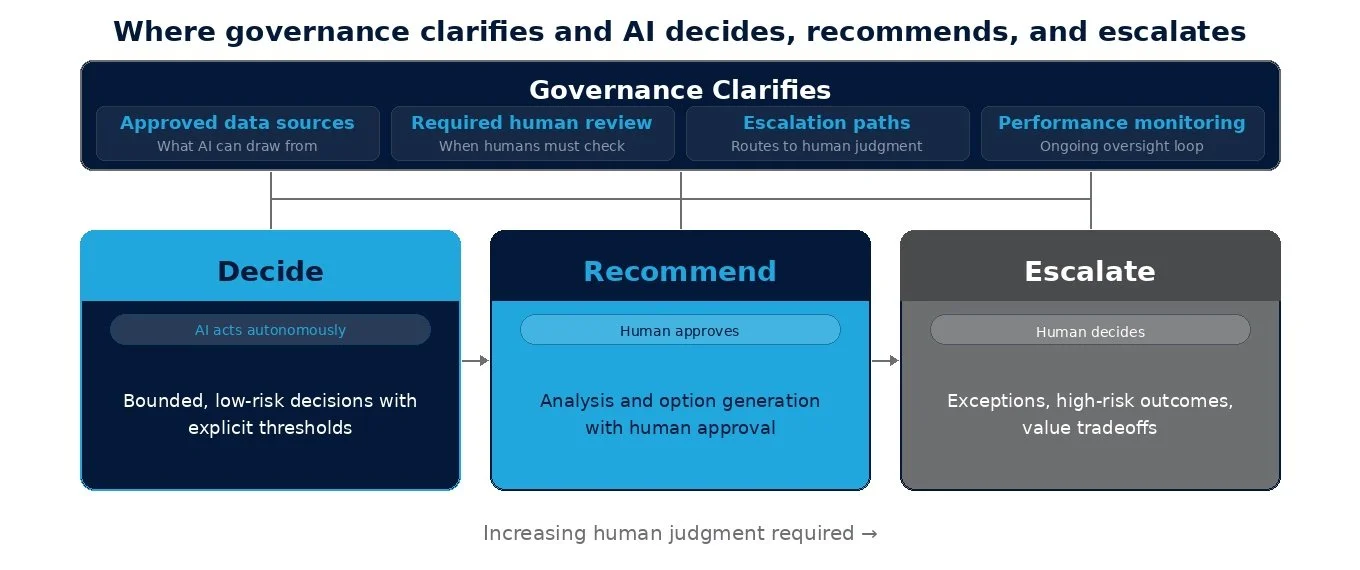

Effective governance answers practical questions before scale introduces risk:

Where AI is allowed to decide, where it may recommend, and where humans must lead

Which outputs require human review

How exceptions are escalated

Who owns outcomes when humans and AI collaborate

When these rules are explicit, teams move faster with confidence. When they are not, progress slows as uncertainty grows.
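One way to make such rules explicit is to write them down as a small decision-rights table that systems and teams can both read. The sketch below assumes hypothetical decision types, role names, and a confidence threshold for review; none of these come from a specific framework, and a real policy would be set by the organization's own governance process.

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    AI_DECIDES = "ai_decides"        # AI may act without prior review
    AI_RECOMMENDS = "ai_recommends"  # AI drafts, a human approves
    HUMAN_LEADS = "human_leads"      # AI may assist, a human decides


@dataclass(frozen=True)
class DecisionRule:
    authority: Authority
    outcome_owner: str                   # who owns the outcome
    escalate_to: str                     # where exceptions go
    review_below_confidence: float = 0.0 # review threshold (assumed 0-1 scale)


# Hypothetical policy entries for illustration only.
POLICY = {
    "invoice_matching": DecisionRule(
        Authority.AI_DECIDES, "finance_ops_lead", "controller", 0.90),
    "vendor_selection": DecisionRule(
        Authority.AI_RECOMMENDS, "procurement_director", "cfo"),
    "pricing_exception": DecisionRule(
        Authority.HUMAN_LEADS, "pricing_manager", "cfo"),
}
DEFAULT_ESCALATION = "ai_governance_board"


def route(decision_type, ai_confidence, is_exception=False):
    """Return (action, responsible_role) for one AI output."""
    rule = POLICY.get(decision_type)
    if rule is None:
        # No rule on file: never let AI act by default.
        return ("escalate", DEFAULT_ESCALATION)
    if is_exception:
        return ("escalate", rule.escalate_to)
    if rule.authority is Authority.HUMAN_LEADS:
        return ("human_decides", rule.outcome_owner)
    if rule.authority is Authority.AI_RECOMMENDS:
        return ("human_review", rule.outcome_owner)
    if ai_confidence < rule.review_below_confidence:
        return ("human_review", rule.outcome_owner)
    return ("ai_decides", rule.outcome_owner)
```

In this sketch every path returns a named role, including the path where AI decides, so ownership of the outcome stays explicit even when no human reviews the individual output.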

Governance also protects judgment. AI systems are optimized for patterns. They do not understand context, intent, or consequence the way humans do. Clear delineation of responsibility ensures that AI accelerates analysis while humans retain authority over decisions that carry material risk, nuance, or long-term impact.

Designing for human creativity and strategic thinking

The goal of AI strategy is not to replace human thinking. It is to protect it. When AI absorbs repetitive, time-consuming, and pattern-driven work, it creates space for people to focus on reframing problems, imagining new possibilities, navigating tradeoffs, and exercising judgment under uncertainty. This balance does not happen organically. It requires intentional workflow design and explicit expectations for how AI participates in the work. Without this clarity, human creativity is crowded out by constant review and oversight, and the promise of AI quickly gives way to cognitive overload.

The leadership obligation

AI creates leverage, but leverage without direction magnifies noise. In an environment where action becomes cheap, strategy is the discipline of deciding where not to act.

Leadership responsibility in this moment is not to pursue every possibility, but to choose deliberately. Organizations that treat AI as an operating model decision gain more than efficiency. They gain focus, and with it, better decisions.

When priorities are clear and governance is deliberate, AI begins to create real capacity. The next leadership question is no longer what to automate, but how that capacity should be reinvested.

The next article in this series explores that shift, examining how leaders can deliberately reinvest the AI dividend to improve decisions, enable growth, and avoid turning efficiency into pressure.

About Forum Solutions

Forum Solutions works with executives to translate deliberate choices about AI into practice. Through strategy, operating model design, governance, and change enablement, we help organizations move beyond experimentation to sustained impact while protecting the human judgment AI ultimately depends on.

About the Author

Shelley Holm is Co‑Founder and Managing Director at Forum Solutions, where she works with executives to move AI from experimentation to enterprise impact through strategy, operating model redesign, and transformation at scale.